OMFG part 2: OpenAI announces how it'll guide us in a world controlled by AI

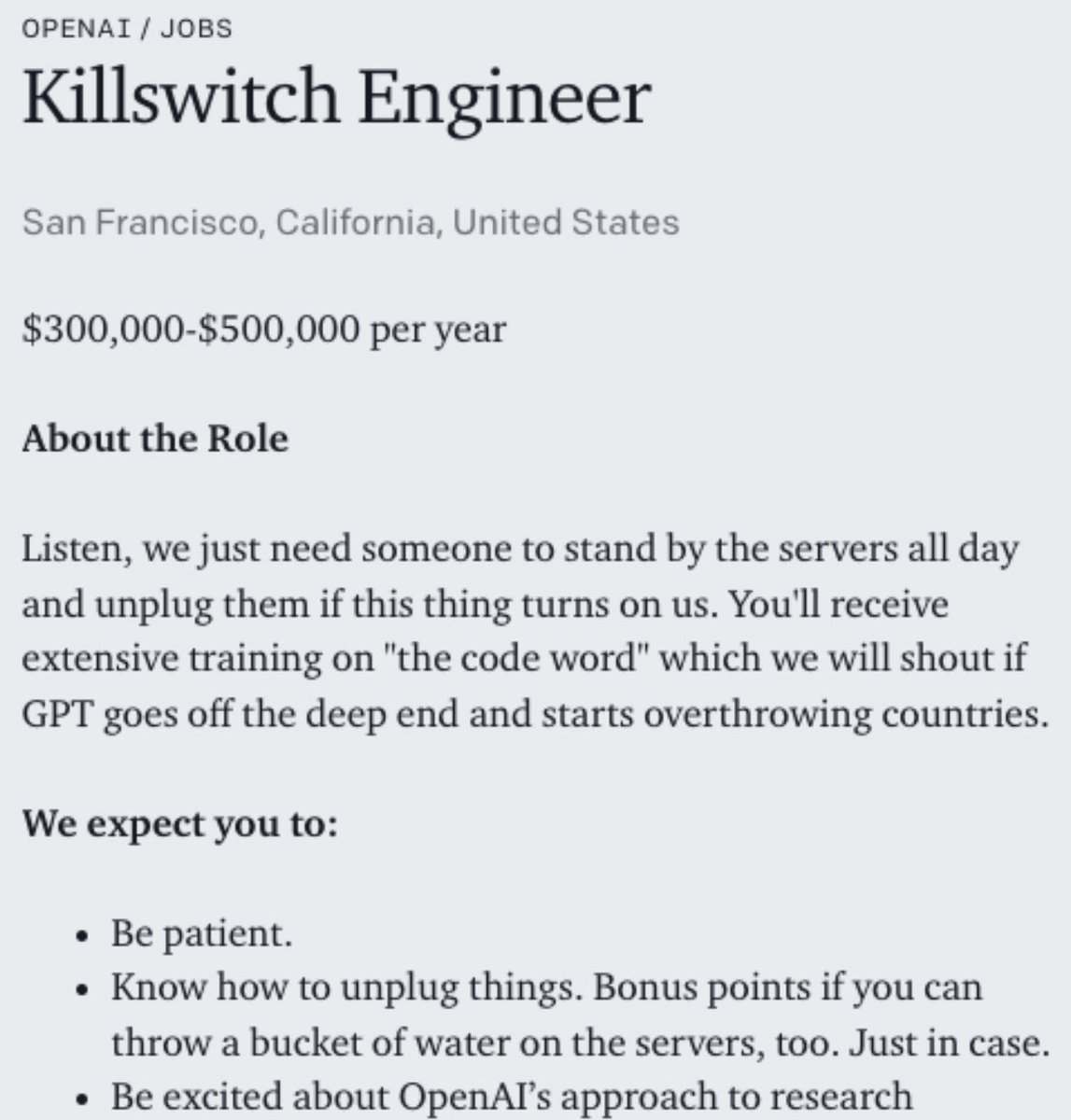

Ran across this minutes ago thanks to this cynical response from software engineer Grady Booch:

Link to tweet

That links to this new OpenAI blog, "Planning for AGI and beyond": https://openai.com/blog/planning-for-agi-and-beyond/

AGI is artificial general intelligence. See https://en.m.wikipedia.org/wiki/Artificial_general_intelligence .

From this OpenAI blog, letting us know that the people unleashing what they call "superintelligence" hope it will be benign but can't be sure:

-snip-

On the other hand, AGI would also come with serious risk of misuse, drastic accidents, and societal disruption. Because the upside of AGI is so great, we do not believe it is possible or desirable for society to stop its development forever; instead, society and the developers of AGI have to figure out how to get it right.[1]

-snip-

At some point, the balance between the upsides and downsides of deployments (such as empowering malicious actors, creating social and economic disruptions, and accelerating an unsafe race) could shift, in which case we would significantly change our plans around continuous deployment.

-snip-

The first AGI will be just a point along the continuum of intelligence. We think it’s likely that progress will continue from there, possibly sustaining the rate of progress we’ve seen over the past decade for a long period of time. If this is true, the world could become extremely different from how it is today, and the risks could be extraordinary. A misaligned superintelligent AGI could cause grievous harm to the world; an autocratic regime with a decisive superintelligence lead could do that too.

-snip-

Successfully transitioning to a world with superintelligence is perhaps the most important—and hopeful, and scary—project in human history. Success is far from guaranteed, and the stakes (boundless downside and boundless upside) will hopefully unite all of us

-snip-


Feeling reassured that the same people who unleashed a hallucinating chatbot they can't stop from hallucinating (one that gave insane responses when paired with the Bing search engine, until it was gagged by being limited to short answers with lots of subjects off limits) will see us safely through a transition to the AI superintelligence they feel they have to unleash?

EDITING to link to my earlier OMFG post on OpenAI's batshit "Educator considerations for ChatGPT": https://www.democraticunderground.com/100217677504

6 replies


OMFG part 2: OpenAI announces how it'll guide us in a world controlled by AI (Original Post)

highplainsdem

Feb 2023

OP

highplainsdem

(62,953 posts) 1. Another response from Grady Booch:

live love laugh

(16,458 posts) 2. We can't even stop non-AI hackers, so this is disastrous.

highplainsdem

(62,953 posts) 4. I wish hackers were all we had to worry about.

highplainsdem

(62,953 posts) 3. And now Grady just posted something I've seen others posting:

crickets

(26,168 posts) 5. I do not welcome our would-be AI overlords. Big nope.

Go back to the drawing board, people. Start with rules on what is and is not acceptable behavior from your scary little toys, then get back to us when you've figured out how to enforce them.

highplainsdem

(62,953 posts) 6. Just found a detailed response to this idiocy from OpenAI, thanks to a retweet from Grady Booch.

The thread is from Emily Bender (see her profile on Twitter, and https://wikipedia.org/wiki/Emily_M._Bender ) and starts with the tweet below, Thread Reader page here: https://threadreaderapp.com/thread/1629708863699308544.html .

Link to tweet

A few of her comments to OpenAI:

Paraphrasing: "Our experts thought we could do this as a non-profit, but then we realized we wanted MOAR MONEY. Also we thought we should just do everything open source but then we decided nah. Also, can't be bothered to even document the systems or datasets."

-snip-

The problem isn't regulating "AI" or future "AGI". It's protecting individuals from corporate and government overreach using "AI" to cut costs and/or deflect accountability.

-snip-

And, more to the pt: There are harms NOW: to privacy, theft of creative output, harms to our information ecosystems, and harms from the scaled reproduction of biases. An org that cared about "benefitting humanity" wouldn't be developing/disseminating tech that does those things.

No, they don't want to address actual problems in the actual world (which would require ceding power). They want to believe themselves gods who can not only create a "superintelligence" but have the beneficence to do so in a way that is "aligned" with humanity.
