NNadir

NNadir's Journal

Ingenuity Dies.

I apologize in advance; I just couldn't resist titling this post on a note in my Nature Briefing feed this way. What it's about:

First aircraft to fly on Mars dies — but leaves a legacy of science

Subtitle:

Excerpt:

The helicopter, a box-shaped drone with a pair of 1.2-metre-long carbon-fibre blades, was a trailblazer for interplanetary spacecraft. NASA’s Jet Propulsion Laboratory (JPL) in Pasadena, California, built it as a test to see whether powered flight was possible in the thin atmospheres of other worlds. It accompanied NASA’s Perseverance rover to Mars, where both landed in February 2021 and began studying Jezero.

“Ingenuity absolutely shattered our paradigm of exploration by introducing this new dimension of aerial mobility,” says Lori Glaze, head of NASA’s planetary sciences division in Washington DC.

Ingenuity was supposed to make only five flights and last about a month, but ultimately it traversed 17 kilometres of the red planet and flew for a total of nearly 129 minutes between 2021 and 2024. During its final journey, something fatal happened — perhaps the rotor blades striking the ground, NASA announced on 25 January. An image that the helicopter took of the ground after the flight ended shows the shadow of one of the blades, with at least one-quarter of it missing. The helicopter can still communicate with Earth, at least for now, but it will not fly again...

Plutonium powered...

Dana-Farber retractions: meet the blogger who spotted problems in dozens of cancer papers

From my Nature Briefing news feed:

Dana-Farber retractions: meet the blogger who spotted problems in dozens of cancer papers

Subtitle:

I'm not sure if the news item is open access; hopefully it is. An excerpt:

In the papers, published in a range of journals including Blood, Cell and Nature Immunology, David found images from western blots — a common test for detecting proteins in biological samples — in which bands seemed to be spliced, stretched and copied and pasted. He also found images of mice duplicated across figures where they shouldn’t have been. (Nature’s news team is editorially independent of its publisher, Springer Nature, and of other Nature-branded journals.)

It was not the first time that some of these irregularities had been noted; some were flagged years ago on PubPeer, a website where researchers comment on and critique scientific papers. The student newspaper The Harvard Crimson reported on David’s findings on 12 January.

The DFCI, an affiliate of Harvard University, had already been investigating some of the papers in question before David’s blogpost was published, says the centre’s research-integrity officer, Barrett Rollins. “Correcting the scientific record is a common practice of institutions with strong research-integrity processes,” he adds. (Rollins is a co-author of three of the papers that David flagged and is not involved in investigations into them, says DFCI spokesperson Ellen Berlin.) The DFCI is declining requests for interviews with its researchers about the retractions.

David, based in Pontypridd, UK, spoke to Nature about how he uncovered the data irregularities at the DFCI and what scientists can do to prevent mix-ups in their own work...

There are still tons upon tons of Western Blots published, and I was involved just yesterday in explaining to a client why Mass Spec, as expensive and sometimes challenging as it is, should become the world standard for proteomic analysis, which, of course, is happening without any input from me. Yes, you can get something, even a lot, out of a Western Blot, but there are many ways to be fooled or to deliberately fool.

A Study of the Extraction of Plutonium and Neptunium into Ionic Liquids.

The paper to which I'll refer is this one: Sequestration of Np(IV) and Pu(IV) with Hexa-n-octyl nitrilotriacetamide (HONTA) in an Ionic Liquid: Unusual Species vis-à-vis a Molecular Diluent Rajesh B. Gujar, Bholanath Mahanty, Seraj A. Ansari, Richard J. M. Egberink, Jurriaan Huskens, Willem Verboom, and Prasanta K. Mohapatra Industrial & Engineering Chemistry Research 2024 63 (1), 480-488.

Regrettably I won't have very much time to spend discussing this paper but will endeavor to make a few points about this critical task, chemical separations of the components of used nuclear fuel to extract all of the valuable components, not the least of which are the transuranium actinides, neptunium, plutonium and americium. The paper is about the first two of these. To my mind it is of critical importance to exploit all of the actinides that can be obtained from used nuclear fuel. In particular, neptunium, and to a lesser extent americium, have important properties that will allow for the irreversible denaturation of weapons grade plutonium in any successful nuclear weapons disarmament program.

Let me preface the excerpting by saying I am not really a solvent extraction kind of guy, although I am very much interested in the properties of nuclear materials, especially the actinides, in ionic liquids, which have wide electrochemical windows and are not subject to flammability and to vapor pressures. Still the paper is interesting, nice to see. I certainly agree emphatically with the first sentences in the opening paragraphs. From the introduction:

In the quest for clean energy with low carbon emissions, nuclear energy is considered to be one of the most viable options and its contribution to the world energy demand is going to grow at a much rapid rate. (1–6) Though nuclear energy appears quite attractive, its sustainability can be achieved with a closed fuel cycle, which involves reprocessing of the spent nuclear fuel. (7–10) Furthermore, the global acceptance of nuclear energy is dependent on the efficiency at which large volumes of highly radioactive wastes are processed. The reprocessing of spent nuclear fuel involves the “PUREX” (Plutonium Uranium Redox Extraction) process that uses tri-n-butyl phosphate (TBP) in a paraffinic diluent as the solvent. (11) Though TBP is the work horse of the nuclear industry for over half a century, there are several drawbacks, namely, significant aqueous solubility, poor radiolytic stability, deleterious nature of its degradation products, and generation of large volumes of solid wastes, which triggered the research on the development of alternative solvent systems that can be considered as “green”, i.e., with C, H, O, and N type extractants. (12,13) These efforts, started in the sixties of the last century, (14–16) have suggested that dialkyl amides are very good alternatives to TBP due to their complete incinerability and innocuous nature of their degradation products. Several researchers have evaluated many such dialkyl amides and concluded that these extractants are much more efficient than TBP under certain conditions. (17–19) Though plant-scale applications are lacking, there is a good possibility that the dialkyl amides can replace TBP in the near future at least for fuels containing a high Pu content. (20–22)

Cooperative complexation hypothesis suggests that multifunctional ligands can be far more efficient than ligands containing one ligating moiety. (23) Reports by Sasaki et al. have suggested that tripodal amide ligands such as N,N,N′,N′,N″,N″-hexa-n-octyl nitrilotriacetamide (HONTA) is one of the most efficient extractants for the extraction of actinide ions such as UO₂²⁺ and Pu⁴⁺ from nitric acid feeds. (24) The authors have also reported selective extraction of trivalent actinides vis-à-vis the trivalent lanthanides due to binding of the “soft” actinide ion to the soft donor “N” atom making the ligand tetradentate. (25,26) Other research laboratories have also studied the extraction behavior of HONTA and its homologs with varying alkyl chains with some very interesting results. (27–29) Though Np is not considered important in the PUREX process, as it escapes to the raffinate stream, its chemistry needs to be understood when amide-based extractants are used for spent fuel reprocessing. In this context, one needs to study the extraction behavior of Np, mainly in its +4 oxidation state with HONTA as well. To the best of our knowledge, a detailed understanding of this is not reported to date by any research group.

The studies involving HONTA, reported so far, utilized molecular diluents such as n-dodecane or its mixture with iso-decanol. In recent years, room temperature ionic liquids (termed as RTILs or simply ILs), a class of neoteric diluents, have been suggested as alternative “green” diluents due to properties like nonflammability, wide electrochemical window, higher radiolytic stability and benign nature. (30–34) RTILs are known to extract metal ions much more efficiently than the molecular diluents to the extent that in certain cases the D (distribution ratio) values are reported to be 3–4 orders larger than those obtained with molecular diluents. (35) There is no report available where HONTA has been used for the extraction of tetravalent actinide ions with RTIL-based diluents. This work deals with the use of both a molecular diluent and an ionic liquid for the extraction of the Np(IV) ion from an aqueous nitric acid medium. A comparison is done with the extraction behavior of Pu(IV) ion as well. Apart from the liquid–liquid extraction studies, cyclic voltammetric studies were also carried out to understand the redox behavior in the RTIL medium...

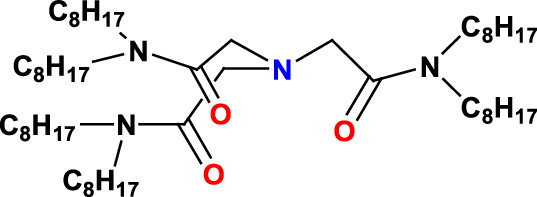

The structure of HONTA:

The ionic liquid used - there are many thousands of ionic liquids possible, along with the related deep eutectic solvents - is the well known and often discussed 1-butyl-3-methyl imidazolium bis(trifluoromethane sulfonyl)imide (Bumim·Tf2N). The paper discusses the electrochemical utility of this system by running cyclic voltammetry, a process that allows for the reduction and oxidation of metals - much as is done in batteries - to change their chemical properties. This particular work involves nitric acid solutions, and thus reduction of neptunium to the metal cannot be carried out, nor is there any reason to suspect the authors were interested in doing so.

The cyclic voltammogram and UV/Vis spectra:

The caption:

Personally I am very interested in the metallic forms of plutonium and neptunium, since they form together a low melting eutectic that suggests use of a very unusual fuel system in which I am trying to interest my son. (He says, "Dad, graduate students are unlikely to get to play with liquid plutonium!" It's certainly not the focus of his lab groups, but before I die I want to leave this in the back of his mind.) I'm hoping that he'll realize his goal of working in a national laboratory and will weasel his way into getting to play with liquid plutonium and its alloys.

As a fuel, a metallic liquid plutonium/neptunium alloy would allow for the nearly instantaneous and pretty much irreversible denaturation of weapons grade plutonium into a form of plutonium that is totally unacceptable for use in nuclear weapons.

Anyway, the authors find that the nature of the HONTA complexing agent is completely different in ionic liquids than it is in traditional molecular solvents, explored in this case with n-decane and isodecane. This is unsurprising.

Have a nice day tomorrow.

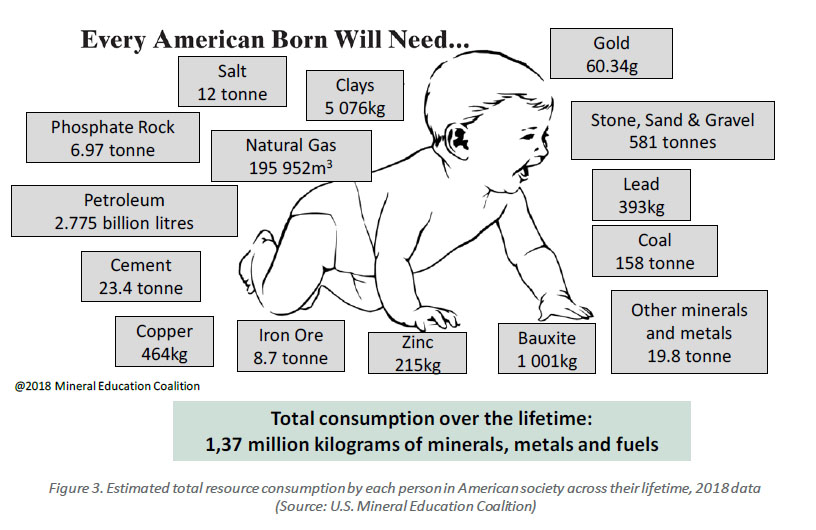

Every American Baby Will Need...

Source: The Mining of Minerals and the Limits to Growth

(I know I shouldn't post here, but I couldn't help myself.)

Have a happy hydrogen/electric "green" car day.

A Danish University Moves Toward a Nuclear Engineering Curriculum.

Danish university to create new nuclear research centre

Subtitle:

Excerpts:

Under the leadership of Bent Lauritzen, a senior researcher at DTU Physics, the new centre - to be named DTU Nuclear Energy Technology - will strengthen the collaboration between relevant research environments, currently located at the departments of DTU Physics, DTU Energy, DTU Chemistry and DTU Construct...

...The purpose of the new centre will be to: attract and support academic talent to strengthen research in nuclear energy technologies; expand capacities for teaching and supervision of students, including PhD students; create experimental facilities for such areas as characterisation of materials and simulation of new reactor technologies; and strengthen collaboration with Danish and international companies.

"The climate crisis has reached an extent that makes it crucial that we research all technologies that may be relevant in phasing out fossil energy sources," said DTU President Anders Bjarklev. "Regardless of whether nuclear power has a future in Denmark, it is important for DTU to have research in the field because we have an obligation to contribute research-based knowledge to society and our students. Our ambition with the creation of the new centre is to strengthen the part of the research that is specifically aimed at nuclear energy technologies..."

There are some Danes who get it.

The unknown Jewish engineer behind Hitler's vaunted Volkswagen Beetle

The unknown Jewish engineer behind Hitler’s vaunted Volkswagen Beetle

Subtitle:

By Rich Tenorio, May 19, 2018.

Yet experts say the Nazis omitted a Jewish voice from the creation story: Josef Ganz, a German-Jewish engineer and journalist.

A renowned automotive authority, Ganz developed many concepts incorporated into the Beetle. But he was threatened with assassination, forced into exile and forgotten by history, dying of a heart attack at age 59 in Australia in 1967. Meanwhile, Beetles rolled off postwar assembly lines until 2003, becoming the best-selling car ever...

...The Jewish ‘people’s car’

Born in Budapest in 1908, Ganz became what Schilperoord called a central figure of the German auto industry in the late 1920s and early 1930s.

A mechanical engineer, Ganz was also editor-in-chief of the magazine Motor-Kritik — “the most influential magazine in the German auto industry and also abroad,” Schilperoord said. “He really analyzed cars, and inventions related to cars, with a lot of technical know-how. It was not really done by anyone else...”

I got around to eating some of the pistachios Santa put in my stocking.

You know, I have no religion whatsoever, but pistachios make me want to believe in a benign and magnificent God, a nice lady with a marvelous inclination to spread joy and happiness.

If I'm required to believe in Santa next year to get more pistachios, well then, I'll be baking cookies and putting out carrots for the Reindeer late next December if I'm still alive.

CO2 Hydration Conditions during Hydrogen Evolution and Carbon Dioxide Reduction Gas Diffusion Electrode Reactions

The paper to which I'll refer is this one: Is Hydroxide Just Hydroxide? Unidentical CO2 Hydration Conditions during Hydrogen Evolution and Carbon Dioxide Reduction in Zero-Gap Gas Diffusion Electrode Reactors Henrik Haspel and Jorge Gascon ACS Applied Energy Materials 2021 4 (8), 8506-8516.

As I often note, electricity is a thermodynamically degraded form of energy, and, as such, despite the common public misapprehension that it is "green," perhaps driven by familiarity and a large dollop of wishful thinking, it is dirty. The relationship between thermodynamics and environmental cost is more or less direct; any system with poor thermodynamics will require the generation of more energy than necessary. The generation of electricity wastes energy, even without further waste by the storage of electricity in batteries, or worse, hydrogen.

Electricity production on this planet, as is the case with all forms of energy, remains increasingly dependent on combustion, overwhelmingly the combustion of dangerous fossil fuels.

It follows that the electrochemical reduction of CO2 using electricity, just like the electrochemical reduction of water to make hydrogen, will be intrinsically involved with exergy destruction, that is, poor thermodynamics.

There is an important caveat to the above statement; electricity can represent a thermodynamic improvement where it is a side product which recovers exergy by the transformation of waste heat.

Consider the Sulfur Iodine Cycle, a process for the thermochemical decomposition of water into hydrogen and oxygen without the use of thermodynamically degraded electricity. In this process, H2SO4, sulfuric acid, is decomposed into SO2 gas, O2 gas and steam (H2O) at high temperatures, on the order of 1000°C. Some of this heat can be recovered for another reaction in the cycle, the decomposition of hydrogen iodide (HI) gas into H2 and I2 gases, but this step takes place at a much lower temperature than the decomposition of sulfuric acid. In addition, at high temperatures, the products of sulfuric acid decomposition can recombine in the reverse reaction, the formation of sulfuric acid.

Thus it is useful to consider rapidly cooling the mixture of SO2 gas, O2 gas and steam (H2O) to a temperature at which SO2 gas can be liquefied. The critical temperature (Tc) of SO2, the highest temperature at which a gas can be liquefied, is 430.3 K, or 157.1°C, well above the boiling point of water, suggesting one could recover exergy by placing a steam engine in the stream. This case is a simplification, and for various reasons there are better approaches for exergy recovery from the sulfur iodine cycle, but it is illustrative. The point is that in producing chemical energy, that represented by hydrogen, which can be used for the synthesis of portable fuels, one can recover electricity as a side product, rather than rejecting the heat to the atmosphere.

I do not expect energy sanity to emerge in the future, since popular thinking is either reactionary or conservative, but I do concede that energy sanity is feasible, if not likely.

The paper referenced at the outset of this post is about the electrochemical reduction of carbon dioxide, a much studied concept. Carbon dioxide utilization is often invoked by people trying to greenwash fossil fuels, as is the case with people trying to rebrand fossil fuels as hydrogen, here at DU and elsewhere. The thermodynamics of the reduction of carbon dioxide requires not only that we produce all of the energy that was released in putting it there, the enthalpy, but also that we produce additional energy to overcome the entropy of mixing. I make this point repeatedly. Often, as is the case with people selling fossil fuels by attempting to rebrand them as "hydrogen," the appeal to these ideas, similar to the nonviable and unpracticed scam of carbon dioxide sequestration, is an attempt to greenwash fossil fuels. Nevertheless, so far as direct air capture, or better, capture via the intermediate of seawater, is concerned, I think it is important, to use the cliche, "not to throw the baby out with the bathwater."
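As a rough sketch of the size of the entropy of mixing penalty, one can estimate the ideal minimum work needed to pull one mole of CO2 out of air using W = -RT ln(x), where x is the mole fraction of CO2. The 420 ppm concentration and the 298.15 K temperature below are assumptions chosen for illustration, not figures from the paper, and the function name is hypothetical. Real capture systems need far more than this thermodynamic floor.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol*K)

def min_separation_work_kj_per_mol(mole_fraction, temperature_k=298.15):
    """Ideal-mixture minimum work, in kJ per mole, to extract a dilute gas
    from a large reservoir at the given mole fraction: W = -R*T*ln(x)."""
    if not 0.0 < mole_fraction <= 1.0:
        raise ValueError("mole_fraction must be in (0, 1]")
    return -R * temperature_k * math.log(mole_fraction) / 1000.0

# Assumption: atmospheric CO2 at roughly 420 ppm by mole.
CO2_MOLE_FRACTION = 420e-6
# Standard enthalpy released by C + O2 -> CO2, in kJ/mol.
CARBON_COMBUSTION_KJ_PER_MOL = 393.5

w_mix = min_separation_work_kj_per_mol(CO2_MOLE_FRACTION)
fraction_of_enthalpy = w_mix / CARBON_COMBUSTION_KJ_PER_MOL

print(f"Minimum separation work: {w_mix:.1f} kJ/mol")
print(f"As a fraction of the combustion enthalpy: {fraction_of_enthalpy:.1%}")
```

Under these assumptions the mixing penalty is a few percent of the combustion enthalpy, and it comes on top of the enthalpy that must be supplied to reduce the CO2 itself.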

The question to my mind with respect to direct air (or seawater) capture and reduction (utilization) is "Do we have any choice but to do so?"

For direct air capture, I often consider strong bases, among the strongest being the aqueous hydroxides of rubidium and cesium, both of which are fission products, with cesium having the additional benefit of being highly radioactive, and thus capable of mineralizing extremely difficult to destroy pollutants, notably those having carbon fluorine bonds.

Thus, considering the feasibility of the case - not likely, but feasible - where electricity is a side product rather than the main product of the production of heat, the paper caught my eye, as I was poking around for the kinetics of the hydration of carbon dioxide.

From the introduction:

All the three basic CO2RR flow cell configurations, namely, (1) zero-gap or membrane electrode assembly (MEA)-type, (2) three-compartment liquid catholyte type, and (3) microfluidic electrolyzers, have their own advantages and disadvantages. (4,5,11) Despite the well-defined cathode environment in a liquid catholyte cell, achieving high energy efficiency is hindered by the relatively high Ohmic resistance of the cell. Moreover, stable cell operation needs continuous control of the pressure difference between the CO2 stream and the liquid cathode compartment. By pressing the cathode and anode GDEs directly against the AEM, an MEA is formed. (12) The main advantage of a gas-fed MEA-type or zero-gap electrolyzer is the lower operating cell voltage due to the absence of a catholyte stream and the corresponding lower Ohmic losses. (11,13) However, this poses a further challenge in developing a molecular-level understanding of the ongoing processes due to the ill-defined local environment.

On the other hand, anionic CO2 electroreduction products can cross AEMs (14) along with a substantial CO2 loss in the form of HCO3– and CO32– as CO2 reacts with OH– ions either generated in CO2RR or already present in the electrolyte. (15) An AEM acts as a “CO2 pump;” one or two CO2 molecules are liberated at the anode when carbonate or bicarbonate ions pass through the membrane, respectively. (15) The so-called “carbonation process,” that is, the coexistence of OH–, HCO3–, and CO32–, is a present challenge to be solved in AEM fuel cells. (16,17) In flow cell CO2 electrolysis, carbonation is the main source of the low CO2 utilization efficiency. (18) Up to 70% of the reactant feed is captured under basic conditions and later released in the form of gaseous CO2 at the anode. (19–21) Moreover, carbonate/bicarbonate salt precipitates at the cathode in alkaline electrolyzers, resulting in activity loss and long-term stability issues in high current electrolysis. (22,23)

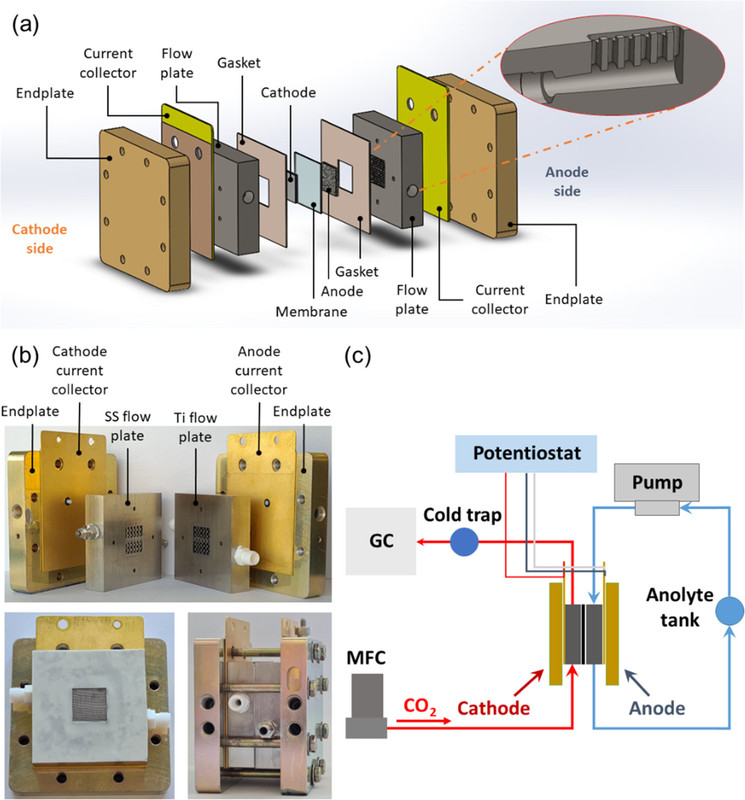

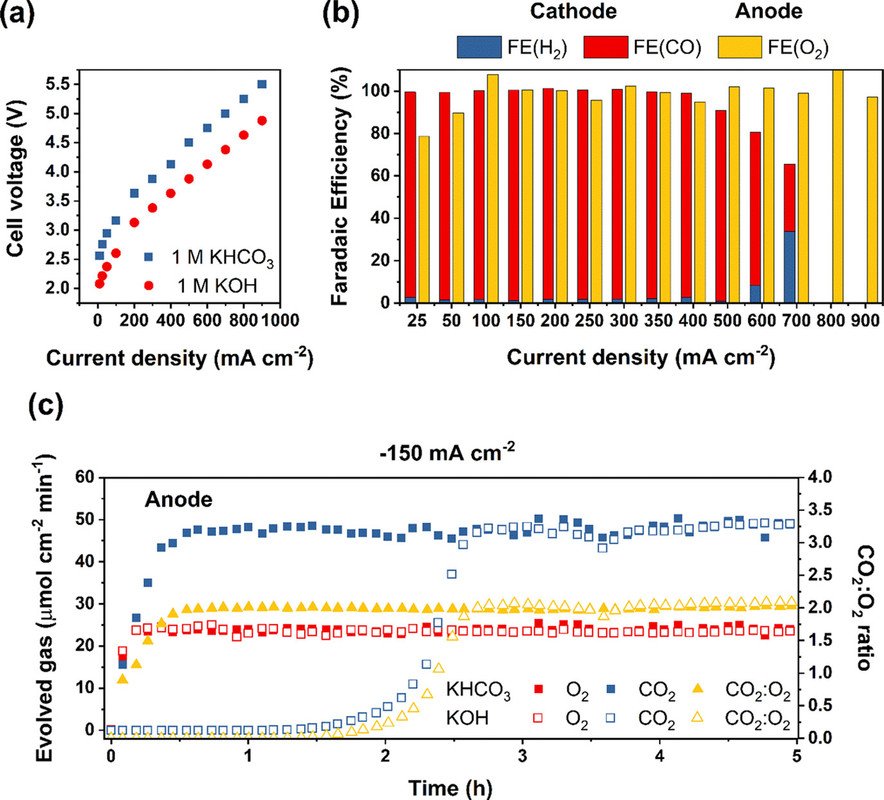

Herein, we aim to elucidate the charge transport through the AEM in a zero-gap flow electrolyzer in 2e– processes. To this end, we present a detailed description of the mass balance for CO2-to-CO and hydrogen evolution reaction (HER) up to 900 mA cm–2 on carbon-supported silver (Ag/C) and various non-CO2RR (Pt/C, IrO2/C, and NiFeCo/Ni) GDEs using KOH and KHCO3 anolytes. We recorded the reactant flow rate depression, the O2 and CO2 evolution at the anode, and the anolyte pH change during electrolysis. The dominant charge carriers were identified at a wide range of operational parameters (current density, CO2 feed rate and partial pressure, and different anolytes and catalysts), and a variation in the chemical nature of the transported ionic species was found. This in turn implies differences in the non-electrochemical CO2 consumption at the cathode/membrane interface through unidentical CO2 hydration conditions in MEA-type zero-gap electrolyzers...

Two figures from the paper:

The caption:

The caption:

It is important to note that what is being discussed is Faradaic efficiency as opposed to thermodynamic efficiency, which is much lower. The former refers to the utilization of electrons to generate the product, the latter to the waste of energy that the process involves, that is, exergy destruction. (In electrochemical papers this is usually discussed in terms of overvoltage, the difference between the voltage required by the Nernst equation and that required for the reaction to actually take place in the real world, that is, theoretical vs. observed.)
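The distinction can be sketched numerically. The snippet below is a minimal illustration, not the paper's method: the run parameters (1 A for one hour, 0.0177 mol of CO, a 3.0 V cell) are hypothetical values chosen only to show how a high Faradaic efficiency can coexist with a much lower energy efficiency. The 1.33 V figure is the approximate reversible voltage for CO2 -> CO + 1/2 O2, taken from a standard Gibbs energy change of about 257 kJ/mol.

```python
FARADAY = 96485.332  # Faraday constant, C per mole of electrons

def faradaic_efficiency(moles_product, electrons_per_molecule, current_a, seconds):
    """Fraction of the charge passed that ended up in the desired product."""
    charge_passed = current_a * seconds
    charge_in_product = moles_product * electrons_per_molecule * FARADAY
    return charge_in_product / charge_passed

def voltage_efficiency(reversible_voltage, cell_voltage):
    """Ratio of the thermodynamic (reversible) voltage to the applied voltage."""
    return reversible_voltage / cell_voltage

# Approximate reversible voltage for CO2 -> CO + 1/2 O2 (a 2-electron process).
E_REVERSIBLE_CO2_TO_CO = 1.33  # volts

# Hypothetical run, for illustration only.
current_a = 1.0        # amperes
seconds = 3600.0       # one hour
moles_co = 0.0177      # CO collected
cell_voltage = 3.0     # volts actually applied

fe = faradaic_efficiency(moles_co, 2, current_a, seconds)
ve = voltage_efficiency(E_REVERSIBLE_CO2_TO_CO, cell_voltage)
energy_efficiency = fe * ve

print(f"Faradaic efficiency: {fe:.1%}")
print(f"Voltage efficiency:  {ve:.1%}")
print(f"Energy efficiency:   {energy_efficiency:.1%}")
```

In this made-up case nearly all of the electrons go to the product, yet well over half of the electrical energy is lost to the overvoltage.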

The mixture of CO and hydrogen is known as "syn gas" from which any important commodity currently obtained from dangerous petroleum can be synthesized, along with portable fuels vastly superior to say, gasoline and/or diesel fuel and/or kerosene (jet fuel).

Have a nice Sunday afternoon.

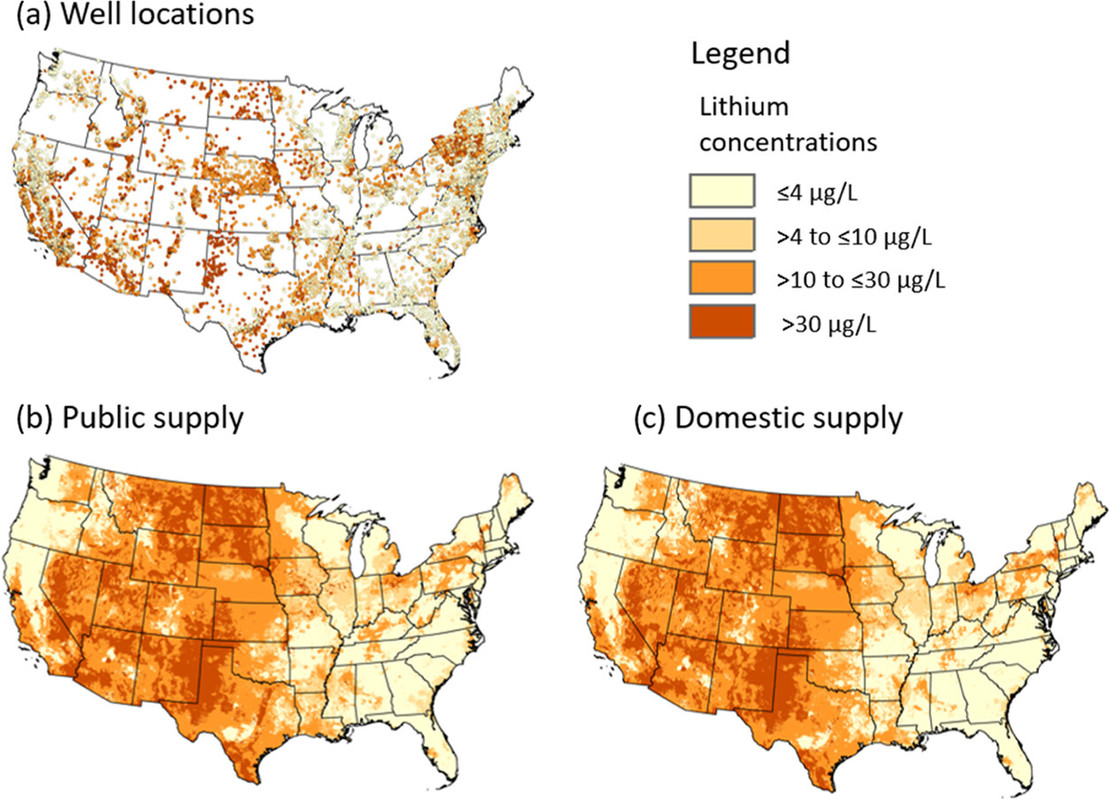

Lithium Concentrations in Groundwater Used as Drinking Water for the Conterminous United States.

The paper to which I'll refer in this post is this one: Estimating Lithium Concentrations in Groundwater Used as Drinking Water for the Conterminous United States Melissa A. Lombard, Eric E. Brown, Daniel M. Saftner, Monica M. Arienzo, Esme Fuller-Thomson, Craig J. Brown, and Joseph D. Ayotte Environmental Science & Technology 2024 58 (2), 1255-1264

The article is open access for the public to read; nevertheless, I'll offer a brief excerpt for convenience.

From the introduction of the paper:

A graphic from the paper:

The caption:

No comment, other than that figure 6 in the paper, if one opens it, is interesting.

Dealing with Chlorine in the Electrolysis of Seawater to Make Hydrogen: Discussion of an Approach.

The paper to which I'll refer in this post is this one: Reducing Chloride Ion Permeation during Seawater Electrolysis Using Double-Polyamide Thin-Film Composite Membranes Xuechen Zhou, Rachel F. Taylor, Le Shi, Chenghan Xie, Bin Bian, and Bruce E. Logan Environmental Science & Technology 2024 58 (1), 391-399.

There is a widespread belief in our culture that hydrogen is "green," which is based on the fact that its combustion product is water. This belief is rather equivalent to the belief that Jesus is going to appear at a revival meeting in Mississippi, wrapped in an American flag, updating turning water into wine by turning Trump signs into AK-47s and inspiring the crowds to go out and shoot gays, and nonwhite people with their new weapons while requiring every woman to have a forced birth of nine or ten children and appointing Donald Trump as his personal representative on Earth.

Hydrogen is a dirty fuel. A Giant Climate Lie: When they're selling hydrogen, what they're really selling is fossil fuels.

I cite this particular paper, which I do not have time to discuss in great detail, because like every other "hydrogen by electrolysis using so called 'renewable energy'" paper published in these troubling times where wishful thinking substitutes for reality, it starts with reality, and then ignores it with a bunch of "could" statements substituting for "is" or "are" statements.

I'll comment briefly below on the parts I will put in bold from the introduction and then make a brief statement of why I think that the paper, despite its "could" rather than "is" or "are" focus or foci, is worth considering for what might be a better world than the one in which we live. From the introduction:

An asymmetric water electrolyzer configuration has recently been proposed that uses fully oxidized salts (e.g., sodium perchlorate) as contained anolyte and seawater as the catholyte to prevent chlorine evolution. (13) Low-cost (less than $10 m–2) (13) polyamide (PA) thin-film composite (TFC) reverse osmosis membranes were used instead of more expensive cation exchange membranes ($250 to $500 m–2) (13) commonly used in acidic water electrolyzers to control the ion exchange between the catholyte and anolyte. (13,14) These PA TFC membranes exhibit high resistance to salt permeation due to steric exclusion. (15,16) Thus, applying TFC membranes to seawater electrolysis helps to limit the passage of chloride ions (Cl–) into the anolyte and the leakage of the anolyte. Water ions, H+ and OH–, can easily transport through TFC membranes, which can decrease the membrane charge transfer resistance. (17,18)

One major concern is that the small amount of Cl– ions passing through TFC membranes during seawater electrolysis still needs to be decreased to minimize chlorine generation. (13,19) Another concern is the energy loss due to the generation of a pH gradient when using TFC membranes and salty water. During electrolysis, H+ ions are generated in the anode and OH– ions are produced in the cathode. Although TFC membranes favor the transport of water ions over salt ions, some cross-membrane charge is still carried by salt ions, increasing the concentrations of H+ in the anolyte and OH– in the catholyte. This pH difference can contribute to the voltage increment during electrolysis, referred to as the Nernstian overpotential. (20,21) Using a membrane with a more selective PA active layer with negative charge was recently shown to moderately reduce the permeation of Cl– ions but have little effect on the transport of counterions (Na+) during electrolysis. (22) The Nernstian overpotential remained nearly constant and thus did not further decrease the energy demands. Therefore, other methods are needed to further reduce Cl– ion transport in TFC membranes, ideally along with the deceleration of Na+ ion permeation.

In this study, we examined the use of a double-polyamide TFC membrane structure for reducing the permeation of chloride ions during water electrolysis (Figure 1). It was hypothesized that the negatively charged polyamide facing the alkaline catholyte would decrease the transport of negatively charged Cl– ions into the anolyte, and the positively charged polyamide facing the acidic anolyte would reduce the transport of Na+ ions into the catholyte. Hence, having a polyamide layer on both sides of the membrane would favor the transport of water ions due to their higher permeability in the PA layer. In addition, the rapid water ion transport can be maintained through very fast water ion association to form water molecules.

Unstated in the first bolded ( "is" ) statement is that not only does the production of hydrogen generate climate change gases, but it does so by destroying the exergy associated with the original fossil fuels. This means that to use hydrogen as a source of energy - which is actually a trivial Potemkin enterprise being sold by fossil fuel salespeople here at DU and elsewhere - means more reliance on dangerous fossil fuels, not less.

These sorts of papers often report Faradaic efficiencies - the utilization of electrons - of greater than 90% (although this paper doesn't refer to Faradaic efficiency at all), but bury the reality of the very different thermodynamic efficiency by discussing it as "overvoltage." The thermodynamic efficiency of electrolysis is roughly 60%, meaning that 40% of the potential exergy is destroyed in making hydrogen via electrolysis.

Now let's talk about the energy content of the world supply of hydrogen connected with the "by 2030" soothsaying, even though hydrogen is overwhelmingly used as a captive chemical reagent, largely in ammonia synthesis, followed by oil refining. The energy content of hydrogen can be taken to be around 120 MJ/kg. Thus the energy value of all the hydrogen on the planet that is supposed to be in demand "by 2030" is about 13.9 Exajoules, as of 2022 according to the 2023 World Energy Outlook. The same document reports that the combined output of solar and wind on the entire planet - infrastructure with a lifetime of roughly 25 years, built at a cost of trillions of dollars - was about 15 Exajoules, this on a planet consuming 634 Exajoules of energy. Thus the expectation that hydrogen "could" be made with so called "renewable energy" would require nearly all of it, produced in an insane orgy of cheering for this stuff while dependence on dangerous fossil fuels gets worse and worse.
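The arithmetic can be checked with a few lines. This is only a sketch using the figures quoted above (120 MJ/kg, 13.9 EJ, 15 EJ, 634 EJ) together with the roughly 60% electrolysis efficiency mentioned earlier. Treating the 15 EJ solar and wind figure as electricity fully available for electrolysis is a simplifying assumption made here for illustration.

```python
MJ_PER_EJ = 1e12   # 1 exajoule = 1e18 J = 1e12 MJ
KG_PER_MT = 1e9    # 1 million tonnes = 1e9 kg

# Figures quoted in the text.
H2_ENERGY_MJ_PER_KG = 120.0
H2_ENERGY_EJ = 13.9
SOLAR_WIND_EJ = 15.0
WORLD_ENERGY_EJ = 634.0
ELECTROLYSIS_EFFICIENCY = 0.60  # rough thermodynamic efficiency from the text

# Mass of hydrogen implied by 13.9 EJ at 120 MJ/kg.
implied_h2_mass_mt = H2_ENERGY_EJ * MJ_PER_EJ / H2_ENERGY_MJ_PER_KG / KG_PER_MT

# Share of solar and wind output equal to the hydrogen's energy content.
h2_share_of_solar_wind = H2_ENERGY_EJ / SOLAR_WIND_EJ

# Solar and wind as a share of world energy consumption.
solar_wind_share_of_world = SOLAR_WIND_EJ / WORLD_ENERGY_EJ

# Electricity needed once the electrolysis loss is included (assumption:
# all of it supplied as electricity at the quoted efficiency).
electricity_needed_ej = H2_ENERGY_EJ / ELECTROLYSIS_EFFICIENCY
needed_vs_solar_wind = electricity_needed_ej / SOLAR_WIND_EJ

print(f"Implied hydrogen mass: {implied_h2_mass_mt:.0f} Mt")
print(f"Hydrogen energy / solar+wind: {h2_share_of_solar_wind:.0%}")
print(f"Solar+wind / world energy: {solar_wind_share_of_world:.1%}")
print(f"Electricity needed at 60%: {electricity_needed_ej:.1f} EJ "
      f"({needed_vs_solar_wind:.2f}x solar+wind)")
```

On these numbers the hydrogen's energy content alone is most of the solar and wind total, and once the electrolysis loss is counted the electricity required exceeds it.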

Now the positives associated with the paper. I personally believe that we should - we don't but we "could" or "should" - rely on nuclear energy as our sole source of primary energy, and in so doing, should utilize process intensification to raise the exergy recovery from the rather abysmal 33% realized by most light water reactors in the world, to a more sustainable 60% or higher. (100% is thermodynamically impossible, which every high school graduate should know, but likely doesn't know.)

Under these conditions, there will be a lot of excess electricity available that can be diverted, even given the fact that electricity is thermodynamically degraded, to electrolysis of seawater. I have spoken favorably many times of the work of the Naval Research scientist Heather Willauer whose work focuses on making jet fuel (for aircraft carriers) from seawater where the source of carbon is dissolved carbon dioxide and carbonate ions in seawater, where the volumetric and mass content is much higher than that of air, with which it is obviously in equilibrium.

This is a pathway to direct air capture of CO2, thus closing the carbon cycle, something proposed back in 2011 by the late great Nobel Laureate George Olah.

Anthropogenic Chemical Carbon Cycle for a Sustainable Future George A. Olah, G. K. Surya Prakash, and Alain Goeppert Journal of the American Chemical Society 2011 133 (33), 12881-12898

Of course, this is in the realm of "could." I'm not optimistic in the age of celebration of lying to ourselves and to each other that this "could" outcome is likely, but it exists.

Have a pleasant Sunday.

Profile Information

Gender: Male

Current location: New Jersey

Member since: 2002

Number of posts: 33,516